Disclaimer: No businesses or even the Internet were harmed while researching this post. We will explore how an attacker can control the Internet access of one or more ISPs or countries through ordinary routers and Internet modems.

- Updateable firmware.

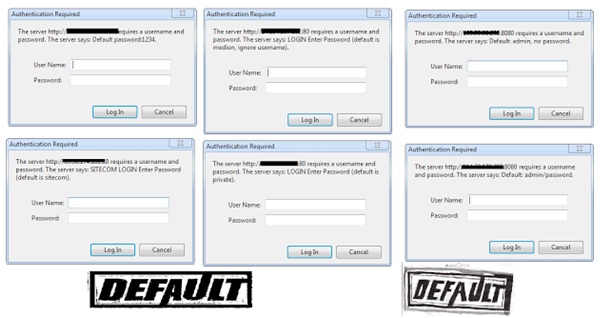

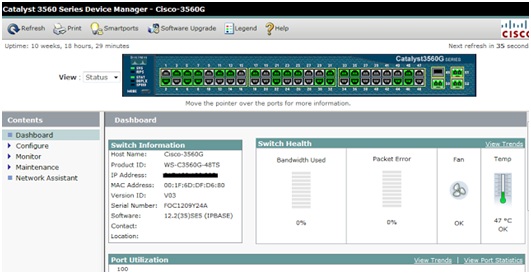

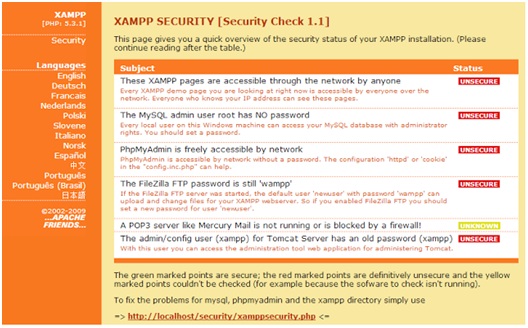

- Default passwords.

- Port forwarding.

- Accessibility over HTTP or Telnet.

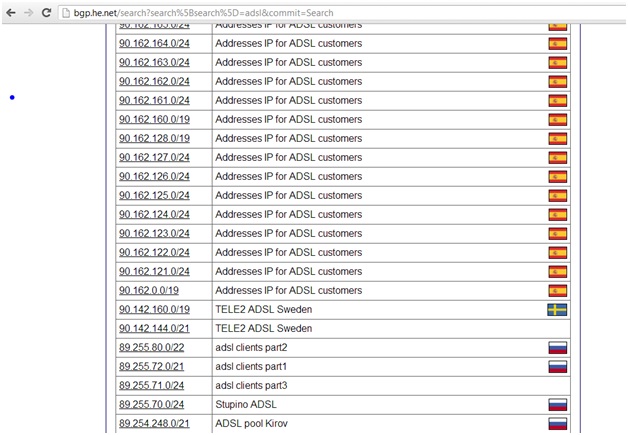

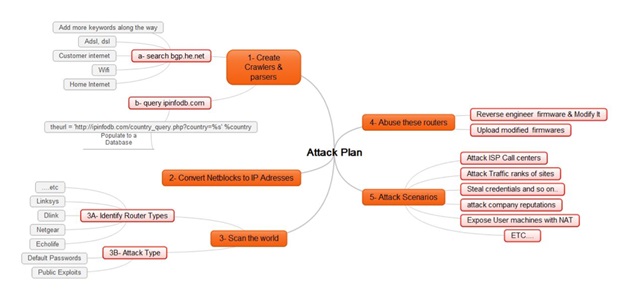

- First, an attacker can gather extensive information about the netblocks of one or more ISPs, or even whole countries, along with some information about how those netblocks are used, from http://bgp.he.net and http://ipinfodb.com.

- Next, they can use whois or parse bgp.he.net to search for additional information about these netblocks, such as data about ADSL, DSL, Wi-Fi, Internet users, and so on.

- Finally, the attacker can convert the matched netblocks into IP addresses.
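The last step, turning netblocks into individual addresses, is plain CIDR arithmetic. A minimal sketch using Python's standard `ipaddress` module follows; the function name `expand_netblocks` and the two example blocks are assumptions for illustration (the blocks are reserved documentation ranges, not real ISP data).

```python
import ipaddress


def expand_netblocks(netblocks):
    """Yield every usable host address in each CIDR netblock, in order."""
    for block in netblocks:
        # strict=False tolerates blocks written with host bits set.
        network = ipaddress.ip_network(block, strict=False)
        for host in network.hosts():
            yield str(host)


# Placeholder netblocks taken from the RFC 5737 documentation ranges.
example_blocks = ["192.0.2.0/30", "198.51.100.8/29"]
addresses = list(expand_netblocks(example_blocks))

print(len(addresses))
print(addresses[0], addresses[-1])
```

Using a generator keeps memory use flat, which matters because a single large netblock can expand to millions of addresses.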

- Identified netblocks for an entire ISP or country.

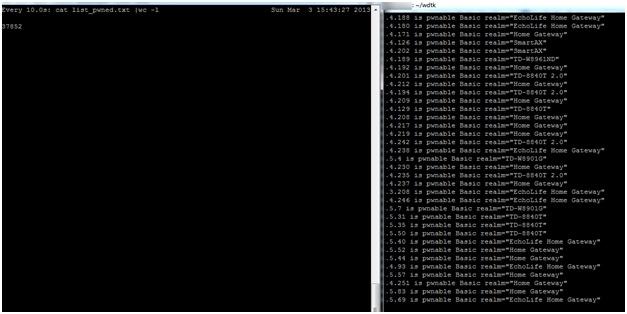

- Pinpointed many ADSL networks, which minimizes the effort that scanning the entire Internet would otherwise require. With a database gathered and sorted by ISP and country, an attacker could, if they wanted to, control a specific ISP or country.

- The router is supported by dd-wrt (http://dd-wrt.com).

- The attacker either works at an ISP or has a friend who works at an ISP and happens to have easy access to assorted firmware.

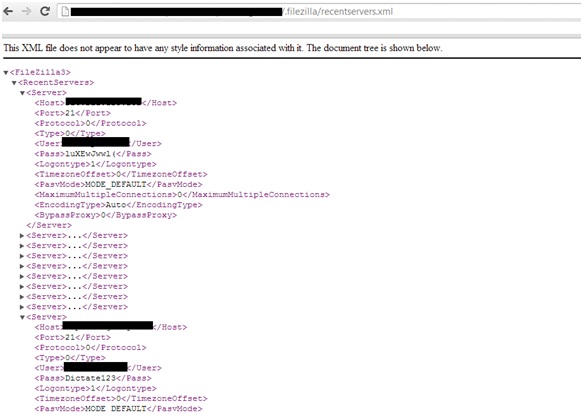

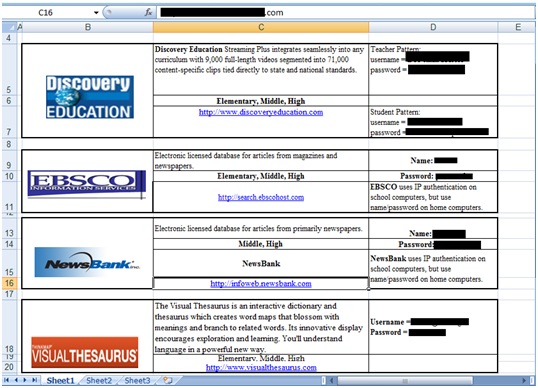

- Search engines and dorks.

- Hardcoded DNS servers.

- New iptables rules that work well on dd-wrt-supported CPEs.

- Remove the Upload New Firmware page.

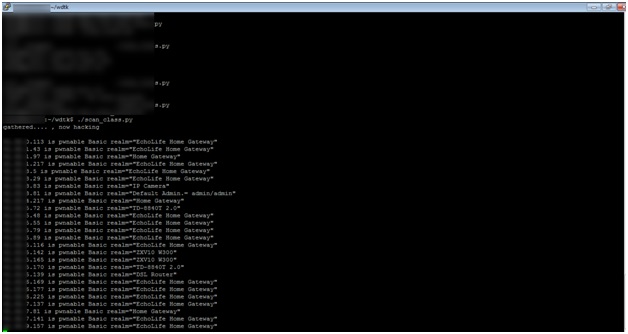

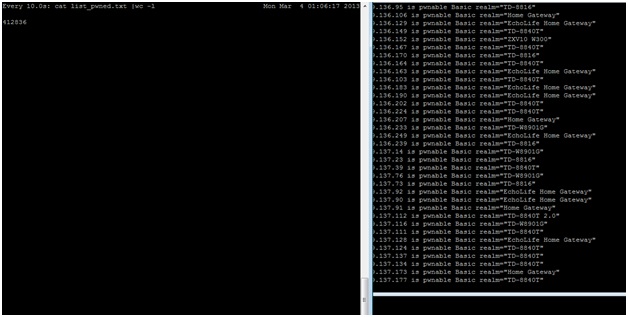

- An attacker gathers a country’s netblocks.

- He filters ADSL networks.

- He reverse engineers and modifies firmware.

- He scans ranges and uploads the modified firmware to targeted CPEs.

- ISP attack: Let’s say an ISP has a large number of IP addresses vulnerable to the CPE compromise attack and an attacker modifies the firmware settings on all the ADSL routers on one or more of the ISP’s netblocks. Most ISP customers are not technical, so when their router is unable to connect to the Internet the first thing they will do is contact the ISP’s Call Center. Will the Call Center be able to handle the sudden spike in the number of calls? How many customers will be left on hold? And what if this happens every day for a week, two weeks or even a month? And if the firmware on these CPEs is unfixable through the Help Desk, they may have to replace all of the damaged CPEs, which becomes an extremely costly affair for the company.

- Controlling traffic: If an attacker controls a huge number of CPEs and their DNS settings, manipulating website traffic rankings becomes trivial. The attacker can also redirect traffic intended for a particular site or search engine to another site or search engine, or anywhere else that comes to mind. (And as suggested before, the attacker can shut down the Internet for all of these users for a very long time.)

- Company reputations: An attacker can post:

  - False news on cloned websites.

  - A fake marketing campaign on an organization’s website.

- Make money: An attacker can redirect all traffic from the compromised CPEs to his ads and make money from the resulting impressions. An attacker can also add auto-clickers to his attack to further enhance his revenue potential.

- Exposing machines behind NAT: An attacker can take it a step further by using port forwarding to expose all PCs behind a router, which extends the attack’s potential impact from the CPEs to the computers connected to them.

- Launch DDoS attacks: Since the attacker can control traffic from thousands of CPEs to the Internet, he can direct large amounts of traffic at a chosen victim as part of a DDoS attack.

- Attack ISP service management engines, RADIUS, and LDAP: Every time a CPE is restarted, a new session is requested; an attacker who controls enough of an ISP’s CPEs can restart them repeatedly and cause RADIUS, LDAP, and other ISP services to fail.

- Disconnect a country from the Internet: If a country’s ISPs do not protect against the kind of attack we have described, an entire country could be disconnected from the Internet until the problem is resolved.

- Stealing credentials: This is nothing new. If DNS records are entirely under an attacker’s control, the attacker can clone a few key social networking or banking sites and use the clones to steal whatever credentials they want.

- The subscriber turns on his home router or modem, which sends an authentication request to the ISP.

- ISP network devices handle the request and forward it to the RADIUS server, which checks the authentication data.

- The RADIUS server sends an Access-Accept or Access-Reject message back to the network device.

- If the reply is a valid Access-Accept message, DHCP assigns an IP address to the subscriber, who is then able to access the Internet.
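The connection sequence above can be modeled as a toy simulation. This is only a sketch under stated assumptions: it does not speak the real RADIUS or DHCP protocols, and `SUBSCRIBERS`, `radius_check`, `DhcpPool`, and `connect` are hypothetical names invented for illustration. The address pool is a reserved documentation range.

```python
import ipaddress

# Hypothetical subscriber database; a real ISP keeps this behind a RADIUS server.
SUBSCRIBERS = {"alice@example-isp": "s3cret"}


def radius_check(username, password):
    """Stand-in for the RADIUS server: answer with Access-Accept or Access-Reject."""
    if SUBSCRIBERS.get(username) == password:
        return "Access-Accept"
    return "Access-Reject"


class DhcpPool:
    """Stand-in for a DHCP server that hands out addresses from one netblock."""

    def __init__(self, cidr):
        self._free = iter(ipaddress.ip_network(cidr).hosts())

    def lease(self):
        return str(next(self._free))


def connect(username, password, pool):
    """Network device forwards the request to RADIUS; DHCP runs only on accept."""
    reply = radius_check(username, password)
    if reply == "Access-Accept":
        return reply, pool.lease()
    return reply, None


pool = DhcpPool("192.0.2.0/24")
print(connect("alice@example-isp", "s3cret", pool))
print(connect("alice@example-isp", "wrong", pool))
```

The point of the model is the ordering: no address is leased until the RADIUS reply is an Access-Accept, so each reconnecting CPE costs the ISP one authentication round trip.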

- Before the subscriber receives an IP address from DHCP, the ISP should check the settings on the CPE.

- If the router or modem is using the default settings, the ISP should continue to block the subscriber from accessing the Internet. Instead of allowing access, the ISP should redirect the subscriber to a web page with a message “You May Be At Risk: Consult your manual and update your device or call our help desk to assist you.”

- Another way of doing this on the ISP side is to deny access at the Broadband Remote Access Server (BRAS) routers at the customer’s edge; an ACL could deny incoming traffic to ports including, but not limited to, 80, 443, 23, 21, 8000, and 8080.

- At their international gateways, ISPs should also deny access from the Internet to the above ports on their ADSL ranges.
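The mitigations above reduce to two small decisions, sketched below in Python. Everything here is an assumption made for illustration: the function names `admission_decision` and `acl_permits`, the way a CPE's "default settings" are represented as a boolean, and the ADSL ranges (reserved documentation blocks). A real deployment would express the port filter as ACLs on the BRAS or gateway routers, not in application code.

```python
import ipaddress

# Ports named in the text; the text says this list is not exhaustive.
BLOCKED_PORTS = {21, 23, 80, 443, 8000, 8080}

# Placeholder ADSL ranges (RFC 5737 documentation blocks), not real ISP data.
ADSL_RANGES = [
    ipaddress.ip_network("192.0.2.0/24"),
    ipaddress.ip_network("198.51.100.0/24"),
]


def admission_decision(authenticated, uses_default_settings):
    """Decide what happens before DHCP would assign an address."""
    if not authenticated:
        return "reject"
    if uses_default_settings:
        # Keep the subscriber offline and show the warning page instead.
        return "redirect-to-warning-page"
    return "assign-ip"


def acl_permits(dst_ip, dst_port):
    """Return False for inbound traffic to a blocked port on an ADSL address."""
    addr = ipaddress.ip_address(dst_ip)
    in_adsl = any(addr in net for net in ADSL_RANGES)
    return not (in_adsl and dst_port in BLOCKED_PORTS)


print(admission_decision(True, True))
print(acl_permits("192.0.2.10", 23))
print(acl_permits("203.0.113.5", 80))
```

The filter only matches traffic whose destination is inside an ADSL range, so servers hosted elsewhere in the ISP's address space keep their public ports.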